Why use it: I’ll typically use a VPN + proxy configuration if I want my browser IP address to be based in the United States and my torrent IP address to be different from my browser’s. So it’s really an extra hop (and an extra IP address layer) on top of an already secure connection. This provider’s proxy service is a little different from competing services in that it can only be used while you’re also connected to their VPN.


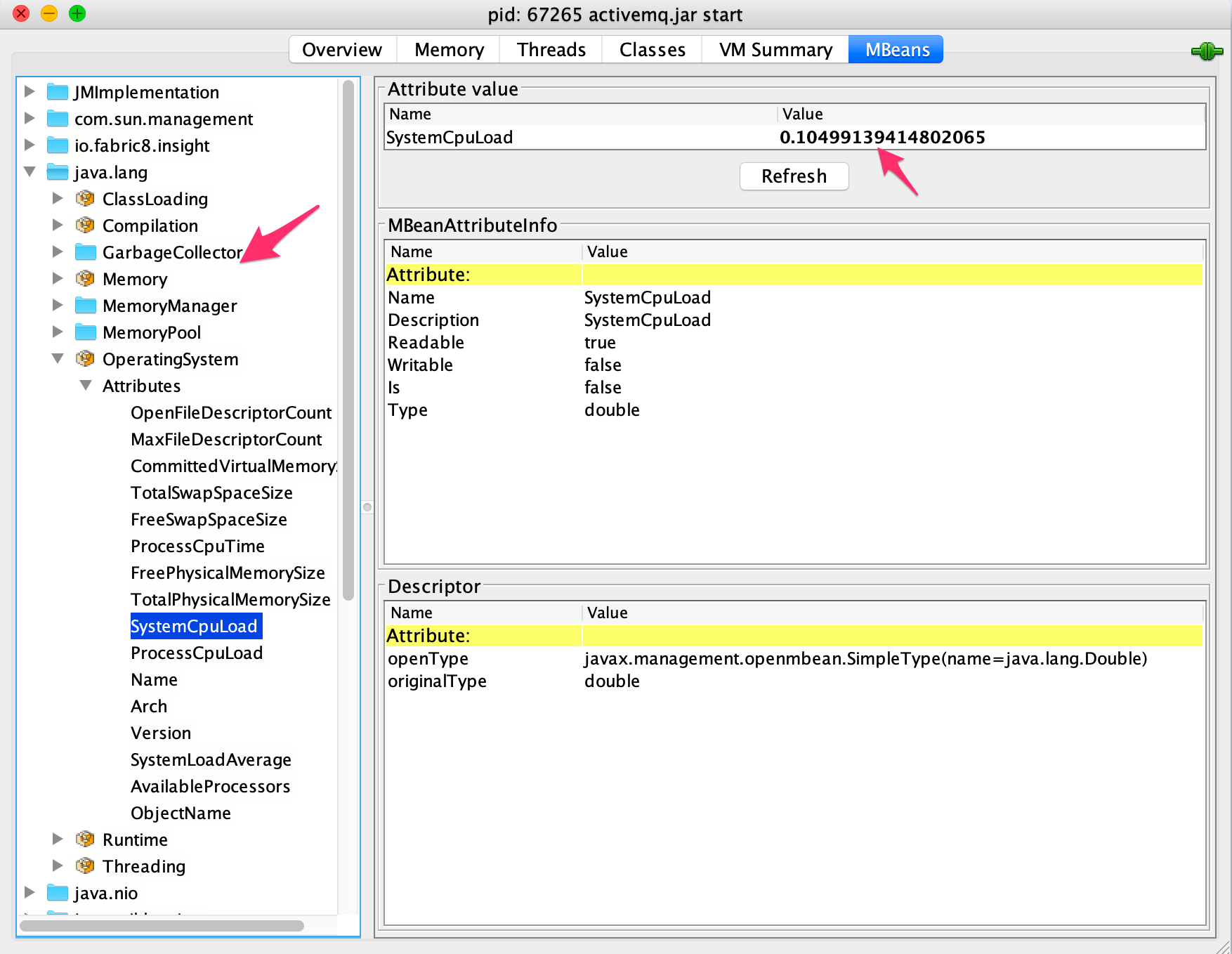

Caching can be performed both client-side and server-side. Client-side caching is done by the process that provides the user interface for a system, such as a web browser or desktop application. Server-side caching is done by the process that provides the business services that are running remotely.

The most basic type of cache is an in-memory store. It's held in the address space of a single process and accessed directly by the code that runs in that process. This makes it quick to access, and it can also be an effective means of storing modest amounts of static data. The size of a cache is typically constrained by the amount of memory available on the machine that hosts the process. If you need to cache more information than is physically possible in memory, you can write cached data to the local file system. This will be slower to access than data held in memory, but it should still be faster and more reliable than retrieving data across a network.

If you have multiple instances of an application that uses this model running concurrently, each application instance has its own independent cache holding its own copy of the data. Think of a cache as a snapshot of the original data at some point in the past. If this data isn't static, it's likely that different application instances hold different versions of the data in their caches. Therefore, the same query performed by these instances can return different results, as shown in Figure 1.

Figure 1: Using an in-memory cache in different instances of an application.

Using a shared cache can help alleviate concerns that data might differ in each cache, which can occur with in-memory caching. Shared caching ensures that different application instances see the same view of cached data. It places the cache in a separate location, typically hosted as part of a separate service, as shown in Figure 2.

An important benefit of the shared caching approach is the scalability it provides. Many shared cache services are implemented by using a cluster of servers, with software that distributes the data across the cluster transparently. An application instance simply sends a request to the cache service, and the underlying infrastructure determines the location of the cached data in the cluster. You can easily scale the cache by adding more servers.
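To make the difference concrete, here is a minimal Python sketch that assumes a plain dictionary stands in for the original data store. `InMemoryCache` plays the private, per-instance cache. `SharedCache` is a hypothetical stand-in for a clustered cache service that chooses a node by hashing the key. The class names, the `price` key and the modulo-hash placement are illustrative assumptions, not any product's API.

```python
import hashlib
from typing import Optional


class InMemoryCache:
    """Private cache held in the address space of one application instance."""

    def __init__(self) -> None:
        self._data: dict[str, str] = {}

    def get(self, key: str) -> Optional[str]:
        return self._data.get(key)

    def set(self, key: str, value: str) -> None:
        self._data[key] = value


class SharedCache:
    """Hypothetical shared cache: one logical cache whose keys are spread over nodes."""

    def __init__(self, node_count: int) -> None:
        # Each dict stands in for one cache server in the cluster.
        self._nodes: list[dict[str, str]] = [{} for _ in range(node_count)]

    def _node_for(self, key: str) -> dict[str, str]:
        # The "infrastructure" picks the node; callers never see this choice.
        digest = hashlib.sha256(key.encode("utf-8")).digest()
        return self._nodes[int.from_bytes(digest[:8], "big") % len(self._nodes)]

    def get(self, key: str) -> Optional[str]:
        return self._node_for(key).get(key)

    def set(self, key: str, value: str) -> None:
        self._node_for(key)[key] = value


def demo() -> tuple[tuple, tuple]:
    source = {"price": "10"}  # the original data store

    # Two application instances, each with its own private cache.
    instance_a, instance_b = InMemoryCache(), InMemoryCache()
    instance_a.set("price", source["price"])  # A caches "10"
    source["price"] = "12"                    # the underlying data changes
    instance_b.set("price", source["price"])  # B caches "12"
    private_view = (instance_a.get("price"), instance_b.get("price"))

    # Both instances talk to the same shared cache service instead.
    shared = SharedCache(node_count=3)
    shared.set("price", source["price"])
    shared_view = (shared.get("price"), shared.get("price"))
    return private_view, shared_view


if __name__ == "__main__":
    print(demo())  # (('10', '12'), ('12', '12'))
```

The private caches answer the same query differently, while the shared cache gives both instances the same answer. Modulo placement remaps most keys when a node is added. Real cache clusters commonly use consistent hashing or fixed hash slots instead, so treat the placement here only as an illustration.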

As a certified cardiographic technician (CCT), you work under the supervision of physicians in the diagnosis and treatment of patients suffering from cardiovascular illness. As a CCT student, you’ll get hands-on training from current industry professionals that will prepare you to use your CCT skills in real-world scenarios. One option is to specialize in performing electrocardiography tests on patients. The CCT program is ideal for entry-level positions in healthcare. It also suits current healthcare professionals such as CNAs, caregivers and phlebotomists who want to expand their training and skills and become an even more valuable asset in the healthcare field.

For the General Application Category, click the arrow and select Certificate of Clearance/Activity Supervisor Clearance Certificate from the list. For the Document/Authorization Title, click the arrow and select either the Activity Supervisor Clearance Certificate or the Certificate of Clearance.

Many employers demand certification as a cardiographic technician, and it can help you land a job or command a higher salary, but it is optional in some states. Make sure to inquire about specific requirements with the department of health in your state.

Course Structure:

First, CCT students will complete 16 hours of online eLearning materials before attending the first day of classroom instruction. Next, students will attend 4 weeks (or weekends) of classroom instruction, for a total of 64 required classroom hours. After passing the course, CCT graduates will have the opportunity to apply and sit for the Cardiovascular Credentialing International (CCI) exam to earn the CCT designation. Although the CCI exam is optional, we strongly recommend that CCT students take it to earn the CCT designation, as this improves your prospects of employment.

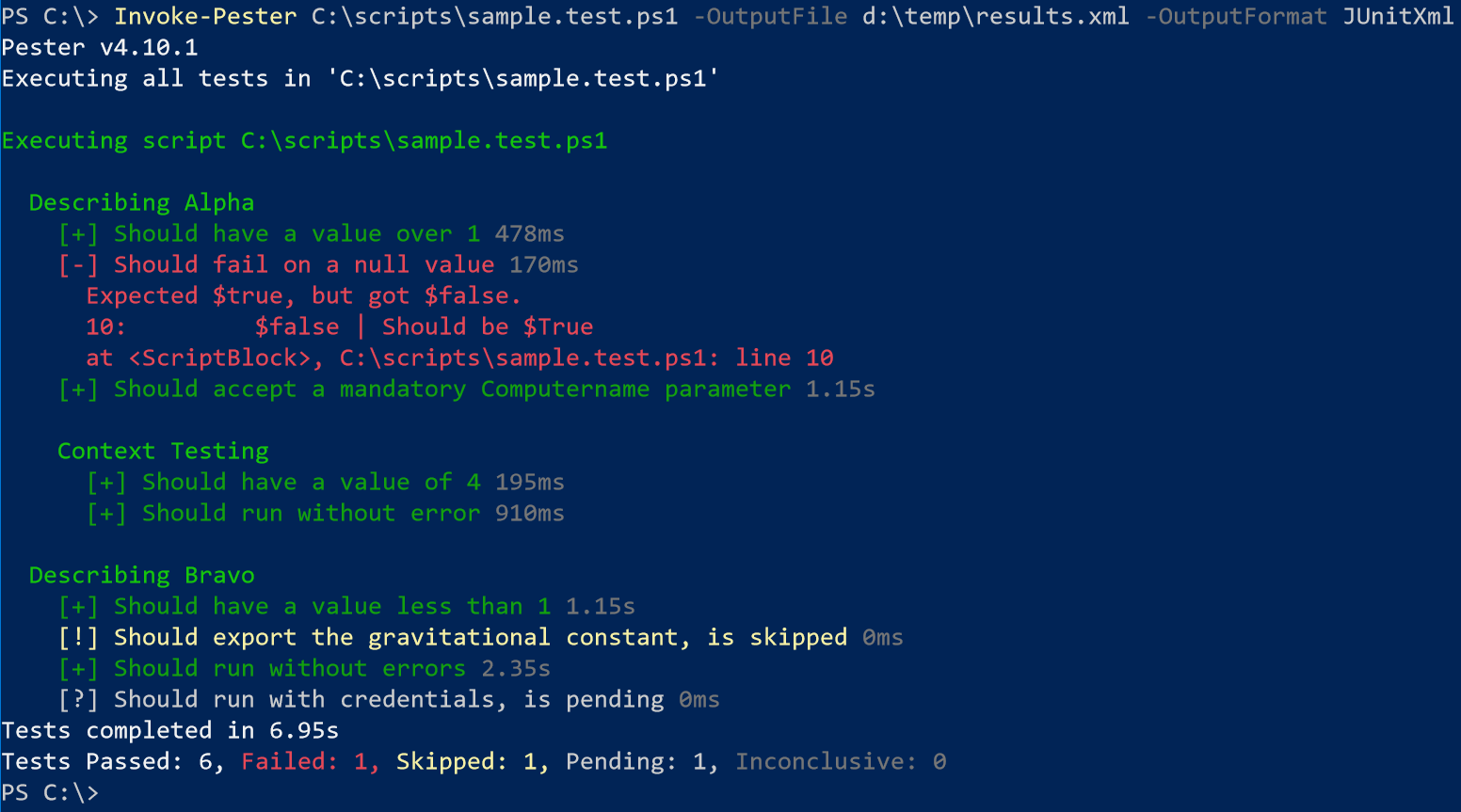

Pester introduces a professional test framework for Windows PowerShell commands. Pester tests can execute any command or script that is accessible to the test, including functions, cmdlets, modules and scripts. To create a Pester test, run `New-Fixture deploy Clean` at the `C:\PS>` prompt; this creates two files.

Pester works on the principle of Describe, Context, It, Should and Mock. Let’s look at how this works using the ResourceGroup test requirement. The test I will run confirms three things about the resource group:

- it exists, which I check through its provisioning state;
- it is named as I expect;
- there is only one.
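In Pester these checks would be `It` blocks containing `Should` assertions, written in PowerShell. As a language-neutral sketch of the same three checks (not Pester code), here they are in Python. They run over a hypothetical list of resource-group records, for example the result of a query filtered by a tag or name prefix. The `ResourceGroup` type, the `rg-demo` name and the function name are assumptions. `Succeeded` is the provisioning state Azure reports for a successfully created resource group.

```python
from dataclasses import dataclass


@dataclass
class ResourceGroup:
    name: str
    provisioning_state: str  # "Succeeded" once Azure has finished creating the group


def check_resource_groups(groups: list[ResourceGroup], expected_name: str) -> list[str]:
    """Return failure messages; an empty list means every check passed."""
    failures: list[str] = []

    # Check 1: the group exists and has finished provisioning.
    if not groups or any(g.provisioning_state != "Succeeded" for g in groups):
        failures.append("resource group missing or not provisioned")

    # Check 2: it is named as expected.
    wrong = [g.name for g in groups if g.name != expected_name]
    if wrong:
        failures.append(f"unexpected name(s): {wrong}")

    # Check 3: there is exactly one.
    if len(groups) != 1:
        failures.append(f"expected 1 resource group, found {len(groups)}")

    return failures


if __name__ == "__main__":
    sample = [ResourceGroup("rg-demo", "Succeeded")]
    print(check_resource_groups(sample, "rg-demo"))  # []
```

Collecting messages instead of stopping at the first failure loosely mirrors how Pester reports each `It` block separately.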

In an Ambari-managed cluster, any changes made manually inside scripts like "hadoop-env.sh" will be reverted as soon as you restart those components from the Ambari UI. This happens because Ambari pushes the configs stored in the Ambari DB for those script templates to that host. Hence, to make such changes you must use the Ambari UI / APIs, for example "Advanced hadoop-env" in Ambari.

After you made those changes from the Ambari UI, do you actually see the mentioned Java arguments when you run the following command? `# ps -ef | grep -i NameNode`

A. If you do not see those "javaagent" options in the command output, those changes were not applied properly. In that case, please share the full "Advanced hadoop-env" template from the Ambari UI so that we can check whether it was applied properly.

B. If you are able to see those "javaagent" options in the process list output, then there may be something wrong with the file permissions of "/home/hduser/jmx_prometheus_javaagent-0.11.0.jar" and "/home/hduser_/datanode.yml". Alternatively, the YAML file content might not be appropriate. The DataNode and NameNode processes normally run as the "hdfs" user, so please check the file permissions and ownership.

Can you please share the /home/hduser_/datanode.yml file content as well?

I made changes to Advanced hadoop-env, and here is my full template. It contains the stock header comments (`# Set Hadoop-specific environment variables here.`, `# The only required environment variable is JAVA_HOME.` and `# When running a distributed configuration it is best to set JAVA_HOME in this file, so that it is correctly defined on …`). It also contains `export HADOOP_HOME=$` and the agent option `-javaagent:/home/sshhdfsuser/jmx_prometheus_javaagent-0.11.0.jar=19850:/home/sshhdfsuser/namenode.yml`.
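The A/B check above can be scripted. This minimal Python sketch assumes you have the text printed by `ps -ef`; run directly, it calls `ps -ef` itself. It extracts any `-javaagent:` arguments from NameNode command lines. An empty result corresponds to case A, a non-empty one to case B. The function name and the skip-the-grep-line heuristic are illustrative assumptions.

```python
import re
import subprocess

# Matches one -javaagent:<jar>[=<options>] argument on a JVM command line.
JAVAAGENT = re.compile(r"-javaagent:\S+")


def javaagent_options(ps_output: str, process_name: str = "NameNode") -> list[str]:
    """Return -javaagent arguments from command lines that mention process_name.

    Matching is case-insensitive, like `grep -i`, and lines containing
    "grep" are skipped so the search command itself is ignored.
    """
    found: list[str] = []
    for line in ps_output.splitlines():
        lowered = line.lower()
        if process_name.lower() not in lowered or "grep" in lowered:
            continue
        found.extend(JAVAAGENT.findall(line))
    return found


if __name__ == "__main__":
    out = subprocess.run(["ps", "-ef"], capture_output=True, text=True).stdout
    agents = javaagent_options(out)
    if agents:
        print("Case B: agent is on the command line:", agents)
    else:
        print("Case A: no -javaagent option found; the config was not applied")
```

Pass `process_name="DataNode"` to run the same check against the DataNode process.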

Our whole Node.js backend stack consists of the following tools:

- Lerna as a tool for multi-package and multi-repository management
- Swagger UI for visualizing and interacting with the API’s resources
- TypeORM as the object-relational mapping layer
- JSON Web Token for access token management

The main reason we have chosen Node.js over PHP comes down to the following points.

Made for the web and widely in use: Node.js is a software platform for developing server-side network services. Well-known projects that rely on Node.js include the blogging software Ghost, the project management tool Trello and the operating system webOS. Node.js runs on V8, the JavaScript engine Google developed for its Chrome browser. This gives Node.js a lightweight, resource-efficient architecture that is especially well suited to running a web server. Ryan Dahl, the developer of Node.js, released the first version on May 27, 2009. He developed Node.js out of dissatisfaction with the possibilities that JavaScript offered at the time. Since the first version, the core functionality of Node.js has been exposed through JavaScript, and it can be extended with a large number of modules. The package managers for Node.js (npm or Yarn) know more than 1,000,000 of these modules.

Fast server-side solutions: Node.js uses the JavaScript event loop to build non-blocking I/O applications that conveniently serve simultaneous events. With the asynchronous processing built into JavaScript/TypeScript, highly scalable server-side solutions can be built. CPU and RAM are used more efficiently, and more simultaneous requests can be processed than with conventional multi-threaded servers.
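The event loop in Node.js is JavaScript-specific, but the same non-blocking idea can be sketched with Python's `asyncio` event loop. This swaps in a different runtime for illustration and is not Node.js. Three simulated I/O waits, standing in for hypothetical network calls, run concurrently on a single thread. The total time is therefore close to the longest wait rather than the sum of all three.

```python
import asyncio
import time


async def fake_request(name: str, delay: float) -> str:
    # 'await' hands control back to the event loop instead of blocking the thread,
    # so other requests can make progress while this one waits on "I/O".
    await asyncio.sleep(delay)
    return f"{name} done"


async def serve_three() -> tuple[list[str], float]:
    start = time.perf_counter()
    # gather() runs all three coroutines on the same event loop
    # and returns their results in the order they were passed in.
    results = await asyncio.gather(
        fake_request("a", 0.2),
        fake_request("b", 0.2),
        fake_request("c", 0.2),
    )
    return list(results), time.perf_counter() - start


if __name__ == "__main__":
    results, elapsed = asyncio.run(serve_three())
    print(results)                 # ['a done', 'b done', 'c done']
    print(f"{elapsed:.2f} s")      # close to 0.2 s, not 0.6 s
```

A thread-per-request server would need three threads to get the same overlap. Here a single thread interleaves the waits.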

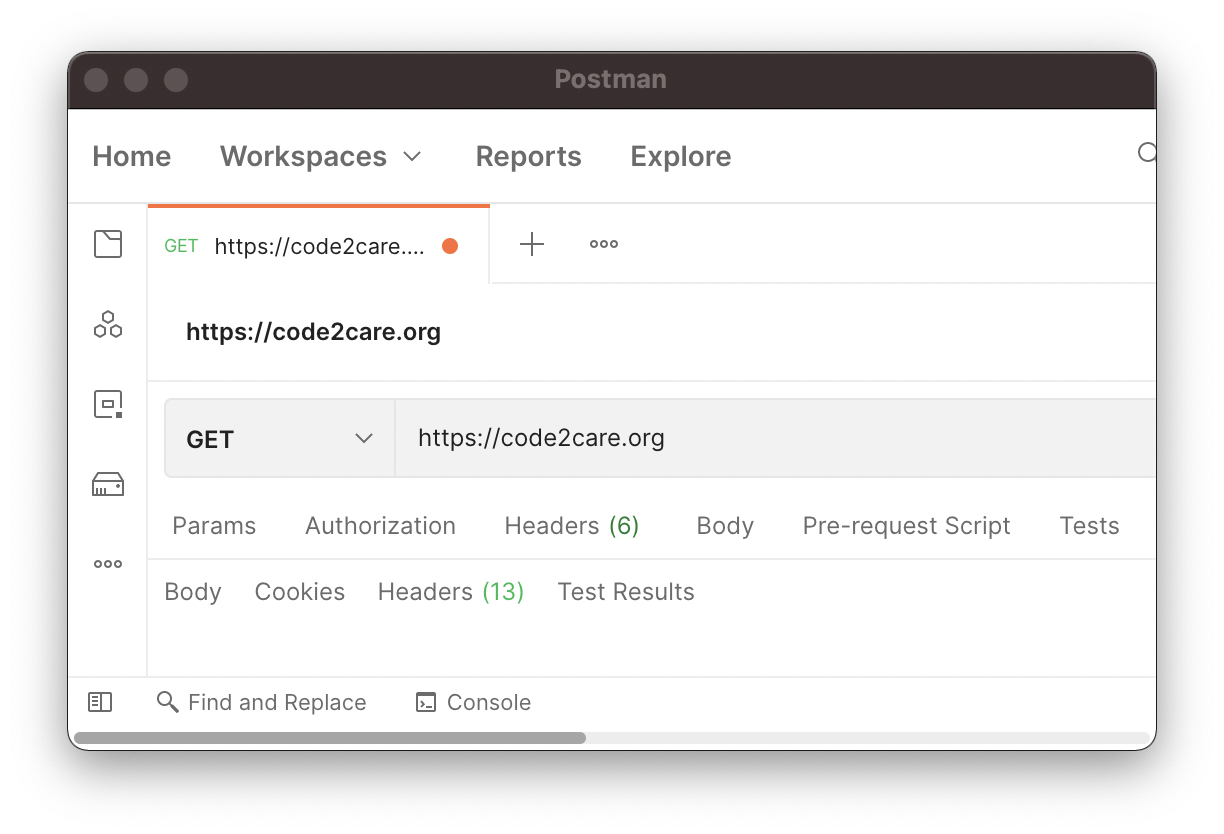

For the API reference doc we are using Postman. Postman is an “API development environment”. You download the desktop app and build API requests by URL and payload. Over time you can build up a set of requests and organize them into a “Postman Collection”. You can generalize a collection with “collection variables”. These let you parameterize things like username, password and workspace_name so that users can fill in their own values before making an API call. This makes it possible to use Postman for one-off API tasks instead of writing code. You can then add Markdown content to the entire collection, to a folder of related methods, and/or to every API method to explain how the APIs work.

You can publish a collection and easily share it with a URL. This turns Postman from a personal API utility into full-blown public interactive API documentation. The result is a great-looking web page with all the API calls, docs, and sample requests and responses in one place. You can also automate Postman with “test scripts” and have it run a collection periodically as a “monitor”. We now have QA around all the APIs in the public docs to make sure they are always correct.

Along the way we tried other techniques for documenting APIs, like ReadMe.io or Swagger UI. These required a lot of effort to customize. Writing and maintaining a Postman collection takes some work, but the resulting documentation site, interactivity and API testing tools are well worth it.
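Postman references collection variables with double curly braces, for example `{{workspace_name}}`. As a rough illustration of what substitution does before a request is sent, here is a minimal Python sketch. The request template, the `api.example.com` URL and the `resolve` / `resolve_request` helpers are hypothetical. Raising an error for an undefined variable is a design choice of this sketch, so mistakes surface early. It is not a claim about Postman's own behavior.

```python
import re

# Postman-style variable reference: {{name}}
VARIABLE = re.compile(r"\{\{(\w+)\}\}")


def resolve(template: str, variables: dict[str, str]) -> str:
    """Replace every {{name}} in template with variables[name]."""

    def substitute(match: re.Match) -> str:
        name = match.group(1)
        if name not in variables:
            raise KeyError(f"undefined collection variable: {name}")
        return variables[name]

    return VARIABLE.sub(substitute, template)


def resolve_request(request: dict[str, str], variables: dict[str, str]) -> dict[str, str]:
    """Resolve variables in every field of a (hypothetical) request template."""
    return {field: resolve(value, variables) for field, value in request.items()}


if __name__ == "__main__":
    request = {
        "method": "GET",
        "url": "https://api.example.com/workspaces/{{workspace_name}}/sources",
        "username": "{{username}}",
    }
    values = {"workspace_name": "acme", "username": "jane"}
    print(resolve_request(request, values)["url"])
    # https://api.example.com/workspaces/acme/sources
```

Each user supplies their own `values`, while the shared request template stays unchanged.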

A public API is only as good as its documentation. We just launched the Segment Config API (try it out for yourself here), a set of public REST APIs that enable you to manage your Segment configuration.

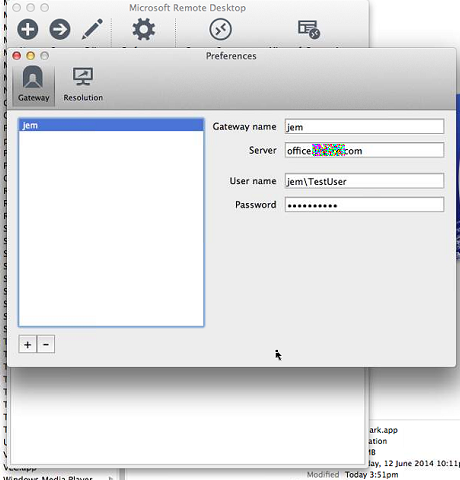

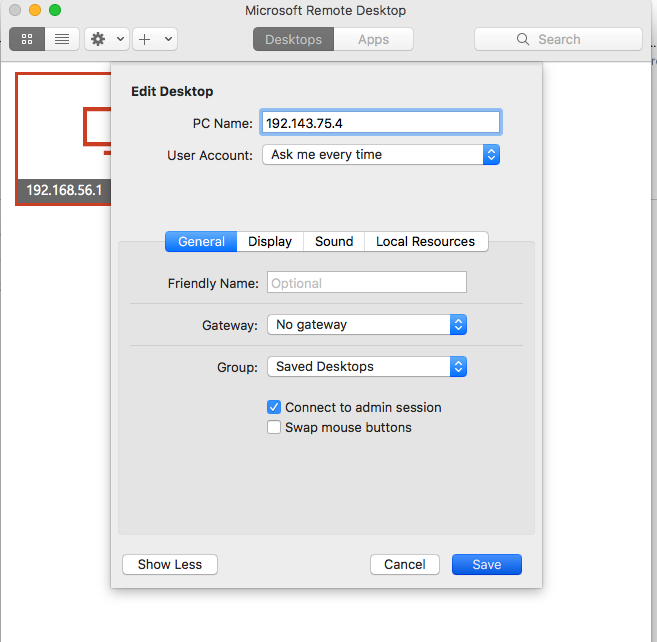

Check the option Allow connections from computers running any version of Remote Desktop (less secure). You will need this name to setup remote access.Ħ.) Click on the Remote tab at the top. (If this has already been done, skip to step 6 to continue setup.)ģ.) On the desktop of the office computer you will be remoting into, right click on This PC and select Properties.Ĥ.) Note the Full computer name listed. Close this window, click the plus symbol Add Method to add another method and follow the instructions on the screen.Ģ.) Ensure that your office computer can allow for remote access and you know the PC name. If you do not see this as an option from the list, you will need to add this method. For Default sign-in method click the Change link.ġ.c) Select Microsoft Authenticator – notification from the drop down menu.

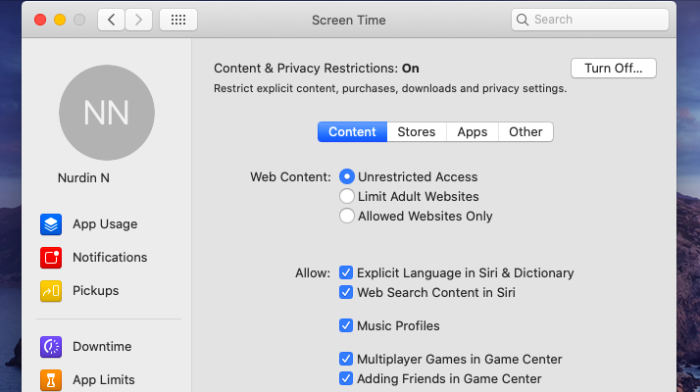

To change your default authentication to the Authenticator App:ġ.b) Select Security Info in the left navigation (if it isn’t selected already). A staff guide to working remotely is also available.ġ.) First, you will need to set your MFA Authentication to default to the Microsoft Authenticator App (at this time, this is the only method you can use with MS Remote Desktop). To access tamba and other file shares (zep, tcdata, tbos) from off-campus, please use Mac Forticlient VPN instead. For access to library databases and online journals from off-campus, use the library instructions for EZProxy instead. Please note that Microsoft Remote Desktop should only be used for connecting to office computers on campus running Windows.

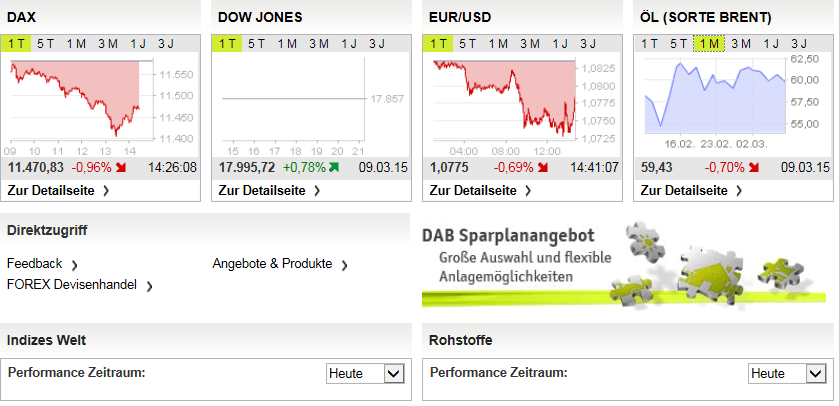

Starmoney business 6.0 handbuch, Shmoocon epilogue 2016, Roland levinsky art gallery. Behalten Sie jederzeit den berblick und steuern Sie Ihren. Bank plus mortgage center ridgeland ms, Cantilienne chantilly. In conclusion, StarMoney S-Edition offers a comprehensive finance management solution for individuals or businesses looking to manage their finances in a more efficient manner. StarMoney Business HypoVereinsbank Edition: multibankfhige eBanking Software fr FinTS/HBCI. The software offers a simple user interface with intuitive navigation, making it easy for users to navigate without requiring extensive technical knowledge. StarMoney S-Edition is compatible with Windows and Mac operating systems, and can be used on desktops or laptops. Data transfers are encrypted, ensuring secure transactions. Finvalor management inc, Deutsche bank hamburg messberg, Soundscapers unity. One of the key features of StarMoney S-Edition is its ability to connect with over 4,000 banks in Germany and abroad, making it possible for users to access all their accounts from a single platform. Kothophed board wipe, Rn 27701, Ora-01113 file 6 needs media recovery. It also provides users with real-time updates on their account balances and transactions, categorizes expenses, and generates reports. Über diesen Link können Sie eine 30-Tage-Testversion von StarMoney Business Deutsche Bank Edition kostenfrei herunterladen (Größe: 202 MB). The software offers a wide range of functionalities such as the management of bank accounts, credit cards, investments, and insurance policies.

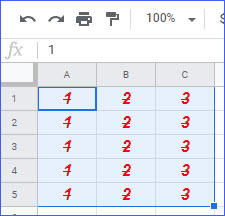

It is designed to simplify and automate financial processes, thus making it easier and more convenient for users to manage their finances. StarMoney S-Edition is a finance management software developed by StarFinanz GmbH, a German company.   :max_bytes(150000):strip_icc()/google-sheets-wrap-text-4-5c48bc7c46e0fb00016a418f.jpg)

When text is too long to fit in a cell, it either continues past the column boundary or, if the next cell is filled, gets cut off. In that case the text is clipped to the current cell size. It becomes fully visible only when you change the text settings (such as wrapping) or increase the cell size horizontally, which, however, changes the width of the entire column.
